One cryptography expert said that ‘serious flaws’ in the way Samsung phones encrypt sensitive material, as revealed by academics, are ‘embarrassingly bad.’

Samsung shipped an estimated 100 million smartphones with botched encryption, including models ranging from the 2017 Galaxy S8 on up to last year’s Galaxy S21.

Researchers at Tel Aviv University found what they called “severe” cryptographic design flaws that could have let attackers siphon the devices’ hardware-based cryptographic keys: keys that unlock the treasure trove of security-critical data that’s found in smartphones.

What’s more, cyber attackers could even exploit Samsung’s cryptographic missteps – since addressed in multiple CVEs – to downgrade a device’s security protocols. That would leave a phone vulnerable to future attacks, specifically IV (initialization vector) reuse attacks. IV reuse attacks undermine the encryption randomization that ensures that even if multiple messages with identical plaintext are encrypted, the corresponding ciphertexts will each be distinct.
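To make the IV's role concrete, here is a minimal sketch. It uses a toy SHA-256-based keystream as a stand-in for a real cipher mode; it is not AES-GCM and not Samsung's code, and the names `toy_keystream` and `toy_encrypt` are made up for illustration. It only shows that a fresh IV makes identical plaintexts encrypt differently, while a reused IV does not.

```python
import hashlib
import secrets


def toy_keystream(key: bytes, iv: bytes, length: int) -> bytes:
    """Toy counter-mode keystream built from SHA-256 (illustration only)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def toy_encrypt(key: bytes, iv: bytes, plaintext: bytes) -> bytes:
    """XOR the plaintext with the keystream selected by (key, iv)."""
    stream = toy_keystream(key, iv, len(plaintext))
    return bytes(p ^ s for p, s in zip(plaintext, stream))


key = secrets.token_bytes(32)
message = b"identical plaintext"

# Fresh random 12-byte IV per message: the two ciphertexts differ.
fresh_a = toy_encrypt(key, secrets.token_bytes(12), message)
fresh_b = toy_encrypt(key, secrets.token_bytes(12), message)

# Same IV used twice: the two ciphertexts are byte-for-byte identical.
fixed_iv = bytes(12)
reused_a = toy_encrypt(key, fixed_iv, message)
reused_b = toy_encrypt(key, fixed_iv, message)

print("fresh IVs give different ciphertexts:", fresh_a != fresh_b)
print("reused IV gives identical ciphertexts:", reused_a == reused_b)
```

Identical ciphertexts are only the most visible symptom; with a keystream-style mode, a repeated IV also lets an observer relate the underlying plaintexts to one another.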

Untrustworthy Implementation of TrustZone

In a paper (PDF) entitled “Trust Dies in Darkness: Shedding Light on Samsung’s TrustZone Keymaster Design” – written by Alon Shakevsky, Eyal Ronen and Avishai Wool – the academics explain that nowadays, smartphones control data that includes sensitive messages, images and files; cryptographic key management; FIDO2 web authentication; digital rights management (DRM) data; data for mobile payment services such as Samsung Pay; and enterprise identity management.

The authors are due to give a detailed presentation of the vulnerabilities at the upcoming USENIX Security 2022 symposium in August.

The design flaws primarily affect devices that use ARM’s TrustZone technology: the hardware support provided by ARM-based Android smartphones (which are the majority) for a Trusted Execution Environment (TEE) to implement security-sensitive functions.

TrustZone splits a phone into two portions, known as the Normal world (for running regular tasks, such as the Android OS) and the Secure world, which handles the security subsystem and where all sensitive resources reside. The Secure world is only accessible to trusted applications used for security-sensitive functions, including encryption.

Cryptography Experts Wince

Matthew Green, associate professor of computer science at the Johns Hopkins Information Security Institute, explained on Twitter that Samsung incorporated “serious flaws” in the way its phones encrypt key material in TrustZone, calling it “embarrassingly bad.”

“They used a single key and allowed IV re-use,” Green said.

“So they could have derived a different key-wrapping key for each key they protect,” he continued. “But instead Samsung basically doesn’t. Then they allow the app-layer code to pick encryption IVs.” The design decision allows for “trivial decryption,” he said.
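As a rough sketch of the alternative Green describes, the snippet below derives a separate wrapping key for each protected key by mixing a per-blob random salt into a keyed hash. Everything here is an assumption made for illustration: the `derive_wrapping_key` helper, the `b"key-wrap"` label and the use of HMAC-SHA256 as the derivation function are not taken from Samsung's design or from the paper.

```python
import hashlib
import hmac
import secrets


def derive_wrapping_key(master_key: bytes, salt: bytes) -> bytes:
    """Derive a 32-byte wrapping key from a master key and a per-blob salt."""
    return hmac.new(master_key, b"key-wrap" + salt, hashlib.sha256).digest()


# Stand-in for a hardware-bound key that never leaves the secure side.
master_key = secrets.token_bytes(32)

# One random salt per protected key; the salt would be stored next to the blob.
salt_a = secrets.token_bytes(16)
salt_b = secrets.token_bytes(16)

wrap_key_a = derive_wrapping_key(master_key, salt_a)
wrap_key_b = derive_wrapping_key(master_key, salt_b)

print("distinct wrapping keys:", wrap_key_a != wrap_key_b)
print("derivation is repeatable:", derive_wrapping_key(master_key, salt_a) == wrap_key_a)
```

With a distinct wrapping key per blob, a repeated IV on one blob would no longer share a keystream with any other blob, which limits the damage of an IV mistake.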

Paul Ducklin, principal research scientist for Sophos, called out Samsung coders for committing “a cardinal cryptographic sin.” Namely, “They used a proper encryption algorithm (in this case, AES-GCM) improperly,” he explained to Threatpost via email on Thursday.

“Loosely speaking, AES-GCM needs a fresh burst of securely chosen random data for every new encryption operation – that’s not just a ‘nice-to-have’ feature, it’s an algorithmic requirement. In internet standards language, it’s a MUST, not a SHOULD,” Ducklin emphasized. “That fresh-every-time randomness (12 bytes’ worth at least for the AES-GCM cipher mode) is known as a ‘nonce,’ short for Number Used Once – a jargon word that cryptographic programmers should treat as a *command*, not merely as a noun.”

Unfortunately, Samsung’s supposedly secure cryptographic code didn’t enforce that requirement, Ducklin explained. “Indeed, it allowed an app running outside the secure encryption hardware component not only to influence the nonces used inside it, but even to choose those nonces exactly, deliberately and malevolently, repeating them as often as the app’s creator wanted.”

By exploiting this loophole, the researchers were able to pull off a feat that’s “supposed to be impossible, or as close to impossible as possible,” he continued. Namely, the team were able to “extract cryptographic secrets from *inside* the secure hardware.”
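The general shape of such an extraction can be sketched with a toy model. The code below is not the researchers' exploit and not AES-GCM: it uses a SHA-256-based keystream as a stand-in, ignores authentication tags, and assumes (hypothetically) that the attacker can read the victim's blob and its IV and can ask the wrapping service to encrypt known material under an IV of the attacker's choosing. The names `wrap`, `victim_blob` and `attacker_blob` are illustrative.

```python
import hashlib
import secrets


def toy_keystream(key: bytes, iv: bytes, length: int) -> bytes:
    """Toy keystream from SHA-256 in counter mode (a stand-in, not AES-GCM)."""
    blocks = []
    for counter in range((length + 31) // 32):
        blocks.append(hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest())
    return b"".join(blocks)[:length]


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def wrap(wrapping_key: bytes, iv: bytes, key_material: bytes) -> bytes:
    """Model of a wrapping service that lets the caller pick the IV."""
    return xor_bytes(key_material, toy_keystream(wrapping_key, iv, len(key_material)))


# Secure side: one wrapping key shared by every blob, never shown to the attacker.
wrapping_key = secrets.token_bytes(32)

# Victim's secret key, wrapped under some IV that is stored with the blob.
victim_iv = secrets.token_bytes(12)
victim_secret = secrets.token_bytes(32)
victim_blob = wrap(wrapping_key, victim_iv, victim_secret)

# Attacker: wrap known material while deliberately repeating the victim's IV.
known_material = bytes(32)
attacker_blob = wrap(wrapping_key, victim_iv, known_material)

# Known plaintext XOR its ciphertext exposes the keystream for (key, IV) ...
keystream = xor_bytes(attacker_blob, known_material)
# ... and that same keystream unwraps the victim's blob.
recovered = xor_bytes(victim_blob, keystream)

print("secret recovered without the wrapping key:", recovered == victim_secret)
```

The attacker never learns `wrapping_key`; reusing the (key, IV) pair is enough, which is why the nonce has to be generated and enforced inside the trusted component rather than supplied by the caller.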

So much for all the encryption security that the special hardware is supposed to enforce, Ducklin mused, as demonstrated by the researchers’ multiple proof-of-concept security bypass attacks.

Ducklin’s admonishment: “Simply put, when it comes to using proper encryption properly: Read The Full Manual!”

Flaws Enable Security Standards Bypass

The security flaws not only allow cybercriminals to steal cryptographic keys stored on the device: They also let attackers bypass security standards such as FIDO2.

According to The Register, as of the researchers’ disclosure of the flaws to Samsung in May 2021, nearly 100 million Samsung Galaxy phones were jeopardized. Threatpost has reached out to Samsung to verify that estimate.

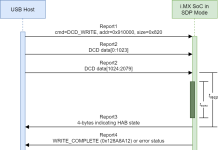

Samsung responded to the academics’ disclosure by issuing a patch for affected devices that addressed CVE-2021-25444: an IV reuse vulnerability in the Keymaster Trusted Application (TA) that runs in the TrustZone. Keymaster TA carries out cryptographic operations in the Secure world via hardware, including a cryptographic engine. The Keymaster TA uses blobs, which are keys “wrapped” (encrypted) via AES-GCM. The vulnerability allowed for decryption of custom key blobs.

Then, in July 2021, the researchers revealed a downgrade attack – one that lets an attacker with a privileged process trigger the IV reuse vulnerability. Samsung issued another patch – to address CVE-2021-25490 – that removed the legacy blob implementation from devices including Samsung’s Galaxy S10, S20 and S21 phones.
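One generic way to close off a downgrade path is to refuse legacy formats outright instead of honoring whatever version the caller requests. The sketch below illustrates that idea only; the format identifiers, the `BlobRequest` type and the `accept_request` function are hypothetical and do not reflect Samsung's actual blob versions or patch.

```python
from dataclasses import dataclass

# Hypothetical format identifiers, for illustration only.
LEGACY_FORMAT = 1
CURRENT_FORMAT = 2

# Allowlist: once the legacy implementation is removed, only the current format remains.
ALLOWED_FORMATS = {CURRENT_FORMAT}


@dataclass
class BlobRequest:
    format_version: int
    payload: bytes


def accept_request(request: BlobRequest) -> bool:
    """Accept only allowlisted formats; a legacy request is refused, not honored."""
    return request.format_version in ALLOWED_FORMATS


print(accept_request(BlobRequest(CURRENT_FORMAT, b"key material")))  # True
print(accept_request(BlobRequest(LEGACY_FORMAT, b"key material")))   # False
```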

The Problem with Designing in the Dark

It’s not just a problem with how Samsung implemented encryption, the researchers said. These problems arise from vendors – they called out Samsung and Qualcomm – keeping their cryptography designs close to the vest, the Tel Aviv U. team asserted.

“Vendors including Samsung and Qualcomm maintain secrecy around their implementation and design of TZOSs and TAs,” they wrote in their paper’s conclusion.

“As we have shown, there are dangerous pitfalls when dealing with cryptographic systems. The design and implementation details should be well audited and reviewed by independent researchers and should not rely on the difficulty of reverse engineering proprietary systems.”

‘No Security in Obscurity’

Mike Parkin, senior technical engineer at enterprise cyber risk remediation SaaS provider Vulcan Cyber, told Threatpost on Wednesday that getting cryptography right isn’t exactly child’s play. It’s “a non-trivial challenge,” he said via email. “It is by nature complex and the number of people who can do proper analysis, true experts in the field, is limited.”

Parkin understands the reasons cryptologists push for open standards and transparency on how algorithms are designed and implemented, he said: “A properly designed and implemented encryption scheme relies on the keys and remains secure even if an attacker knows the math and how it was coded, as long as they don’t have the key.”

The adage “there is no security in obscurity” applies here, he said, noting that the researchers were able to reverse engineer Samsung’s implementation and identify the flaws. “If university researchers could do this, it is certain that well-funded State, State sponsored, and large criminal organizations can do it too,” Parkin said.

John Bambenek, principal threat hunter at the digital IT and security operations company Netenrich, joins Parkin on the “open it up” side. “Proprietary and closed encryption design has always been a case study in failure,” he noted via email on Wednesday, referring to the “wide range of human rights abuses enabled by cell phone compromises,” such as those perpetrated with the notorious Pegasus spyware.

“Manufacturers should be more transparent and allow for independent review,” Bambenek said.

While most users have little to worry about with these (since-patched) flaws, they “could be weaponized against individuals who are subject to state-level persecution, and it could perhaps be utilized by stalkerware,” he added.

Eugene Kolodenker, staff security intelligence engineer at endpoint-to-cloud security company Lookout, agreed that best practice dictates designing security systems “under the assumption that the design and implementation of the system will be reverse-engineered.”

The same goes for the risk of it being disclosed or even leaked, he commented via email to Threatpost.

He cited an example: AES, which is the US standard of cryptography and accepted for top-secret information, is an open specification. “This means that the implementation of it is not kept secret, which has allowed for rigorous research, verification, and validation over the past 20 years,” Kolodenker said.

Still, AES comes with many challenges, he granted, and “is often done incorrectly.”

He thinks that Samsung’s choice to use AES was a good decision. Unfortunately, the company “did not fully understand how to do so properly.”

An audit of the whole system “might have prevented this problem,” Kolodenker hypothesized.

022422 10:15 UPDATE: Added input from Sophos’ Paul Ducklin.
